A new interface technology that recognizes a person’s feelings and emotions on its own has been developed. It is an AI (Artificial Intelligence) system that identifies a person’s emotions without requiring them to talk about or otherwise express how they feel.

Major changes across society are expected once this technology is commercialized. It could advance psychiatry-related industries, including psychological treatment, and could also be used to develop a variety of services that maximize user satisfaction by responding to a person’s feelings and emotions.

KAIST (Korea Advanced Institute of Science & Technology, President Shin Sung-chul) announced on the 12th that a research team led by Professor Cho Sung-ho of the Computer Science Department has developed a ‘system that understands human emotions,’ which analyzes body signals with Deep Learning technology to determine what emotions a person is currently feeling.

The system measures electroencephalogram (EEG) signals from the frontal lobe through a headphone-like body signal sensor, and picks up further body signals through a heart rate sensor attached to the earlobe, where blood flow can be measured.

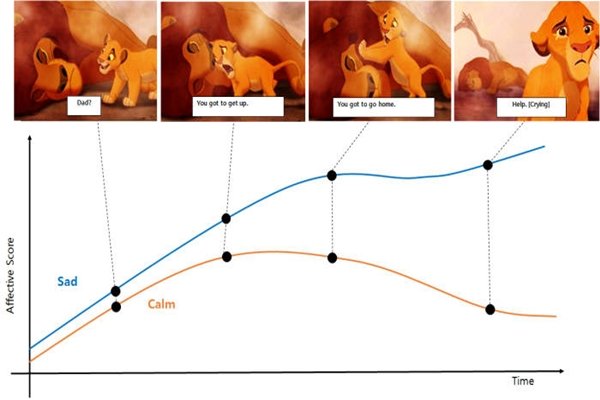

The system then divides the detected body signals into 9 levels according to the cause of the emotion or the state of excitement and analyzes them with Deep Learning technology. It then classifies the result into 12 scientifically defined types of emotion, including happiness, excitement, joy, tranquility, sadness, boredom, sleepiness, anger, and irritation.

Because the human emotional system is complex and many feelings can be felt at the same time, the research team designed the system to pick out the strongest feeling, the one with the greatest influence. The team applies penalty points when the difference between emotion signals is small, and it further improved the system’s performance by adding a function of its own.
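As a rough illustration of the idea of picking the strongest feeling and penalizing cases where the signals are close together, the following minimal sketch may help. It is not the research team’s method, which the announcement does not describe in detail. The function name `dominant_emotion`, the `margin` parameter, and the example scores are all hypothetical, and the penalty is assumed here to be a simple shortfall between the top two scores.

```python
from typing import Dict, Tuple


def dominant_emotion(scores: Dict[str, float], margin: float = 0.1) -> Tuple[str, float]:
    """Return the highest-scoring emotion and a penalty for a narrow lead.

    Hypothetical sketch: `scores` maps emotion labels to model outputs,
    and `margin` is an assumed threshold, not a value from the article.
    """
    if len(scores) < 2:
        raise ValueError("need at least two emotion scores")

    # Rank emotions from strongest to weakest score.
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    (top_label, top_score), (_, runner_up_score) = ranked[0], ranked[1]

    # The penalty grows as the lead over the runner-up shrinks below `margin`,
    # and is zero when the strongest emotion is clearly separated.
    gap = top_score - runner_up_score
    penalty = max(0.0, margin - gap)
    return top_label, penalty


# Example call with made-up scores for three emotions.
label, penalty = dominant_emotion({"happiness": 0.50, "joy": 0.45, "sadness": 0.05})
```

In a training setting, a penalty of this kind could be added to the loss so that the model is pushed to separate the strongest emotion from its nearest competitor; whether the team does this is an assumption.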

Because the technology is still in the testing stage, many more technologies and tasks must be added before it can be commercialized. However, enormous ripple effects are expected if the research team succeeds in commercializing it.

First of all, because the technology can accurately understand a person’s feelings, it can maximize the effect of treatment when used in psychological treatment and the correction of behavioral development. Also, because it can track in real time feelings that change from moment to moment, it can be used to provide personalized services such as playing music that suits the person’s current mood or offering a warm cup of tea.

It can also help communication go more smoothly, since it can accurately understand a person’s feelings and emotions.

“We have developed a technology that can understand feelings and emotions more effectively by learning and analyzing body signals through Deep Learning technology,” said Professor Cho Sung-ho. “It is an original technology that can be applied to a variety of fields and contribute to improving the quality of human life.”

Staff Reporter Kim, Youngjoon | kyj85@etnews.com